Growth Lab

Growth Lab is currently in alpha. We'd love your feedback while we refine the experience and fill in the missing pieces.

Growth Lab is Q's page type for running structured growth work. Instead of tracking ideas in loose notes, you organize them by channel and turn them into experiments with a lifecycle, optional funnel stage, and structured learnings.

It is especially useful for vibe coders who are new to marketing and for small teams that want to track their experiments in one place.

Use it when you want to answer questions like:

- Which marketing channels are actually working for me?

- What have we already tested?

- Which funnel stages are we under-testing or over-testing?

- What should we run next?

Core Concepts

Channels

A channel is a source of distribution or demand generation you want to learn about, such as SEO, Reddit, Product Hunt, cold email, LinkedIn, partnerships, or paid ads.

Each channel has:

| Field | Description |

|---|---|

| Channel ID | Auto-numbered as CH-001, CH-002, etc. |

| Name | The human-readable channel name |

| Status | Your current confidence in the channel |

| Category | Optional grouping like content, community, outbound, paid |

| Description | Optional notes about why the channel matters |

Channel Status

Channel status helps you think at the channel level, not just the experiment level.

| Status | Meaning |

|---|---|

| untested | You have not run a meaningful experiment yet |

| testing | You are actively learning whether the channel has promise |

| promising | Early results look encouraging, but not yet strong enough to declare a win |

| winner | The channel consistently produces signal and deserves more investment |

| killed | The channel is currently not worth further effort |

Keep status conservative. Most channels should spend a while in testing or promising before becoming winner.
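To make the channel fields and statuses concrete, here is a minimal Python sketch of how a channel record could be modeled. It is an illustration only, not Q's actual schema: the class names, the `format_channel_id` helper, and the choice to default new channels to untested are all assumptions.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class ChannelStatus(Enum):
    """The five channel statuses listed in the table above."""
    UNTESTED = "untested"
    TESTING = "testing"
    PROMISING = "promising"
    WINNER = "winner"
    KILLED = "killed"


def format_channel_id(sequence: int) -> str:
    """Format a 1-based sequence number in the CH-001 style (hypothetical helper)."""
    return f"CH-{sequence:03d}"


@dataclass
class Channel:
    channel_id: str
    name: str
    # Assumption: a new channel starts as untested until an experiment runs.
    status: ChannelStatus = ChannelStatus.UNTESTED
    category: Optional[str] = None      # optional grouping, e.g. "content"
    description: Optional[str] = None   # optional notes on why it matters


seo = Channel(channel_id=format_channel_id(1), name="SEO", category="content")
print(seo.channel_id, seo.status.value)  # CH-001 untested
```

Keeping status as a closed set of values, rather than free text, is what makes a stats row or a per-status count straightforward to compute.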

Experiments

An experiment is a concrete test inside a channel. Examples:

- "Post 3 founder-story threads on LinkedIn"

- "Launch a comparison landing page for competitor X"

- "Send 50 cold emails to design agencies"

Each experiment has:

| Field | Description |

|---|---|

| Experiment ID | Auto-numbered with your lab prefix, e.g. EXP-0001 |

| Title | The name of the test |

| Channel | Which channel it belongs to |

| Implementation Phase | Where the experiment is in execution |

| Verdict | Optional outcome after review |

| Funnel Stage | Optional AARRR tag |

| ICE Score | Optional prioritization score |

| Body | A Markdown page with hypothesis, design, results, ROI, learnings, and next steps |

Implementation Phase

Implementation phase tracks execution progress:

| Phase | Meaning |

|---|---|

| idea | Worth considering, but not designed yet |

| designing | You are shaping the test, assets, audience, and success criteria |

| running | The experiment is live |

| analyzing | The experiment finished and you are reviewing the data |

| complete | The experiment has a final verdict and documented learnings |

Verdict

Verdicts are only for completed or near-complete experiments:

| Verdict | Meaning |

|---|---|

| validated | The hypothesis held up strongly enough to keep investing |

| invalidated | The test failed or the underlying assumption was wrong |

| inconclusive | You learned something, but the signal was too weak or noisy to decide |
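The relationship between phase and verdict can be sketched in Python as follows. This is a hypothetical model, not Q's implementation: the class and method names are invented for illustration, and "near-complete" is interpreted here as the analyzing phase, which is an assumption.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Phase(Enum):
    IDEA = "idea"
    DESIGNING = "designing"
    RUNNING = "running"
    ANALYZING = "analyzing"
    COMPLETE = "complete"


class Verdict(Enum):
    VALIDATED = "validated"
    INVALIDATED = "invalidated"
    INCONCLUSIVE = "inconclusive"


# Assumption: "near-complete" is read as the analyzing phase.
VERDICT_PHASES = {Phase.ANALYZING, Phase.COMPLETE}


@dataclass
class Experiment:
    experiment_id: str
    title: str
    channel_id: str
    phase: Phase = Phase.IDEA
    verdict: Optional[Verdict] = None  # stays empty until the test is reviewed

    def set_verdict(self, verdict: Verdict) -> None:
        """Record a verdict, refusing phases where no data has been reviewed."""
        if self.phase not in VERDICT_PHASES:
            raise ValueError(
                f"cannot set a verdict while phase is {self.phase.value!r}"
            )
        self.verdict = verdict


exp = Experiment("EXP-0001", "Send 50 cold emails to design agencies", "CH-004")
exp.phase = Phase.ANALYZING
exp.set_verdict(Verdict.INCONCLUSIVE)
```

Tracking phase and verdict as separate fields lets an experiment be finished without forcing a premature call on whether the hypothesis held.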

AARRR Funnel

Growth Lab uses the AARRR funnel as an optional classification for experiments.

AARRR stands for:

| Stage | Meaning | Typical question |

|---|---|---|

| Acquisition | How people discover you | Are we getting qualified attention? |

| Activation | How people reach first value | Are new users having the "aha" moment? |

| Retention | How people keep coming back | Are users forming a habit? |

| Revenue | How value turns into money | Are we converting attention into paid usage? |

| Referral | How users bring others | Are happy users creating new demand? |

You do not need to assign a funnel stage to every experiment, but doing so makes the funnel coverage map much more useful.
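As a rough illustration of what a funnel coverage count involves, the Python sketch below tallies experiments per AARRR stage and lists the stages with no experiments. The function name and the lowercase stage labels are assumptions made for this example; untagged experiments are represented as `None` and simply skipped.

```python
from collections import Counter
from typing import Dict, Iterable, List, Optional

# The five AARRR stages, in funnel order.
STAGES: List[str] = ["acquisition", "activation", "retention", "revenue", "referral"]


def funnel_coverage(stages: Iterable[Optional[str]]) -> Dict[str, int]:
    """Count experiments per stage; untagged experiments (None) are ignored."""
    counts = Counter(stage for stage in stages if stage is not None)
    return {stage: counts.get(stage, 0) for stage in STAGES}


# One entry per experiment; None means no funnel stage was assigned.
tagged = ["acquisition", "acquisition", None, "activation", "acquisition"]

coverage = funnel_coverage(tagged)
gaps = [stage for stage, count in coverage.items() if count == 0]

print(coverage)
print(gaps)  # ['retention', 'revenue', 'referral']
```

In this example the untagged experiment contributes nothing to the map, which is why tagging matters: an untagged lab looks the same as an empty one.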

ICE Score

ICE is a lightweight prioritization framework:

| Part | Meaning |

|---|---|

| Impact | If this works, how much upside is there? |

| Confidence | How strongly do you believe the hypothesis? |

| Ease | How easy is it to ship and measure? |

Q stores the three values separately and computes the total as:

impact × confidence × ease

Use ICE to compare what to run next, not as a source of truth. A lower-score experiment can still matter if it closes a major learning gap.
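A small worked example of the formula, written as a Python sketch. The 1 to 10 scale for each part is an assumption (this page does not state the range), and the `IceScore` class and the sample scores are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class IceScore:
    # Assumption: each part is scored on a 1-10 scale.
    impact: int
    confidence: int
    ease: int

    @property
    def total(self) -> int:
        """impact x confidence x ease, as described above."""
        return self.impact * self.confidence * self.ease


backlog = {
    "Comparison landing page": IceScore(impact=8, confidence=5, ease=6),  # 240
    "Founder-story threads": IceScore(impact=5, confidence=6, ease=9),    # 270
    "Cold email to agencies": IceScore(impact=7, confidence=4, ease=5),   # 140
}

# Highest total first: a starting point for discussion, not a final ranking.
ranked = sorted(backlog, key=lambda title: backlog[title].total, reverse=True)
print(ranked)
```

Because the three parts are multiplied, a single low value drags the total down sharply. Here the easier, lower-impact thread experiment outranks the higher-impact landing page.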

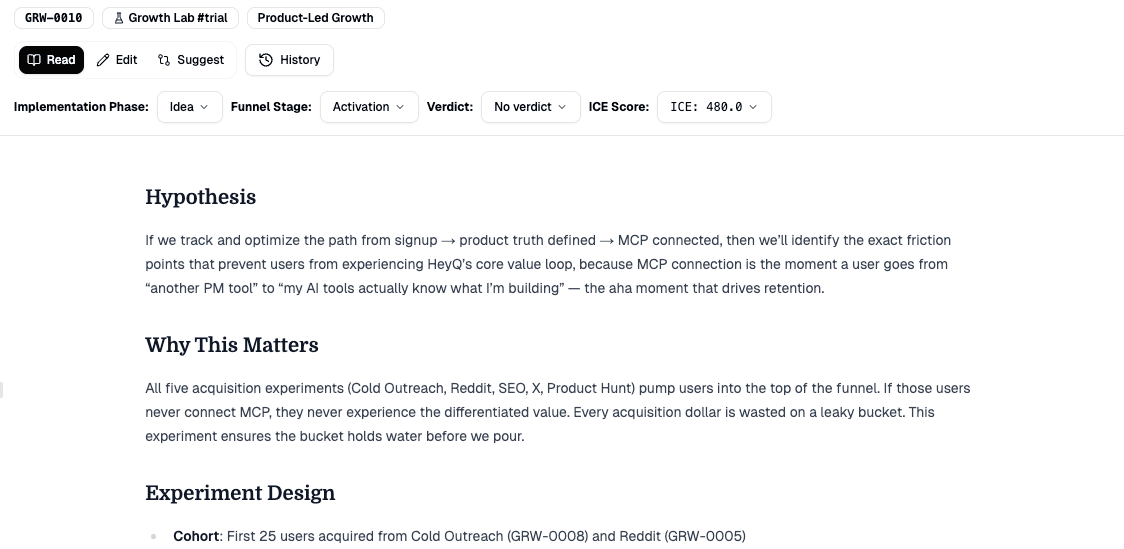

Experiment Body

Each experiment opens like a normal Markdown page, but the default structure is designed for the full learning loop:

- Hypothesis

- Experiment Design

- Results

- ROI

- Learnings

- Next Steps

This matters because Growth Lab is not just a backlog. It is a memory system for what you tried, what happened, and what should happen next.
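One way to picture the default structure is as a template with six headed sections. The Python sketch below builds such a template and reports which sections are still empty. The `##` heading markers, the function names, and the sample hypothesis text are assumptions for illustration; the actual default body in Q may be formatted differently.

```python
from typing import Dict, List

SECTIONS: List[str] = [
    "Hypothesis",
    "Experiment Design",
    "Results",
    "ROI",
    "Learnings",
    "Next Steps",
]


def body_template(title: str) -> str:
    """Build an empty experiment body with one heading per section."""
    lines = [f"# {title}", ""]
    for section in SECTIONS:
        lines += [f"## {section}", ""]
    return "\n".join(lines)


def empty_sections(body: str) -> List[str]:
    """Return the sections that have no text under their heading."""
    filled: Dict[str, bool] = {}
    current = None
    for line in body.splitlines():
        if line.startswith("## "):
            current = line[3:].strip()
            filled[current] = False
        elif current is not None and line.strip():
            filled[current] = True
    return [section for section in SECTIONS if not filled.get(section, False)]


body = body_template("Send 50 cold emails to design agencies")
body = body.replace(
    "## Hypothesis\n",
    "## Hypothesis\nDesign agencies will reply to a short, specific pitch.\n",
)
print(empty_sections(body))
```

A check like this is a quick way to spot experiments that reached a late phase without any recorded results or learnings.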

Toolbar And UI

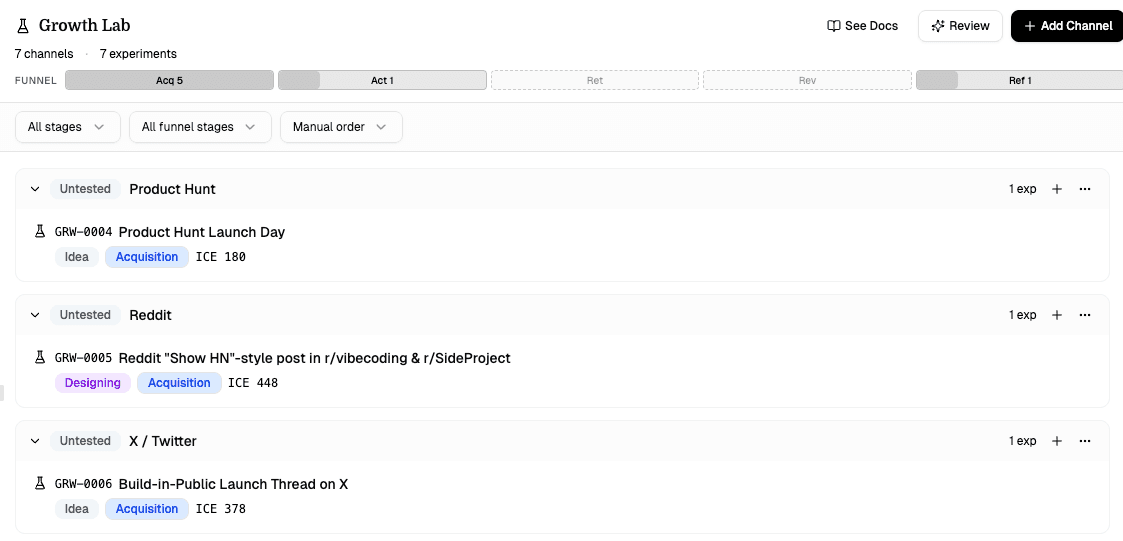

The Growth Lab toolbar gives you a quick read on the state of your system:

| Element | What it does |

|---|---|

| Review | Opens Sidebar AI with a Growth Review prompt based on the full lab |

| See Docs | Opens this documentation page |

| Add Channel | Creates a new channel |

| Stats row | Shows total channels, total experiments, and highlighted channel statuses |

| Funnel coverage map | Visual count of experiments across AARRR stages |

Filtering And Sorting

Growth Lab supports client-side filtering and sorting so you can inspect the board from different angles:

- Filter by implementation phase

- Filter by funnel stage

- Sort experiments by default order, ICE score, or stage

- Reorder channels based on the active sort mode

This makes it easier to answer questions like:

- Which high-ICE experiments should we run next?

- Which channels currently have the strongest active work?

- Are we only testing acquisition and ignoring retention?
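The filter and sort behavior can be approximated with a few lines of Python. This is a sketch under stated assumptions, not Q's client code: the `Row` shape and function names are hypothetical, the sample data is invented, and placing unscored experiments last when sorting by ICE is a design choice made for this example.

```python
from typing import List, Optional, TypedDict


class Row(TypedDict):
    title: str
    phase: str
    stage: Optional[str]  # None when no funnel stage is assigned
    ice: Optional[int]    # None when no ICE score is set


def filter_rows(
    rows: List[Row], phase: Optional[str] = None, stage: Optional[str] = None
) -> List[Row]:
    """Keep rows matching every filter that is set; None means no filter."""
    return [
        row
        for row in rows
        if (phase is None or row["phase"] == phase)
        and (stage is None or row["stage"] == stage)
    ]


def sort_by_ice(rows: List[Row]) -> List[Row]:
    """Highest ICE first; unscored rows go last (an assumption)."""
    return sorted(rows, key=lambda row: (row["ice"] is None, -(row["ice"] or 0)))


rows: List[Row] = [
    {"title": "Comparison landing page", "phase": "idea", "stage": "acquisition", "ice": 240},
    {"title": "Onboarding email series", "phase": "idea", "stage": "retention", "ice": None},
    {"title": "Cold email to agencies", "phase": "running", "stage": "acquisition", "ice": 140},
    {"title": "Founder-story threads", "phase": "idea", "stage": "acquisition", "ice": 270},
]

# "Which high-ICE experiments should we run next?"
next_up = [row["title"] for row in sort_by_ice(filter_rows(rows, phase="idea"))]
print(next_up)
```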

AI Integration

Growth Lab is deeply integrated with Sidebar AI:

- AI can create channels

- AI can create experiments

- AI can update experiment metadata

- AI Growth Review can summarize patterns, gaps, and next bets

- AI is prompted to consider existing project pages before suggesting new growth work

If you enable Growth Lab summary access in an MCP token, external AI tools can receive a structured lab summary in project context instead of raw JSON.

How To Use It Well

Start with a few channels, not every possible channel

Pick the channels that are most plausible for your current product, audience, and founder strengths.

Create small experiments, not vague goals

A good experiment is concrete, measurable, and cheap enough to run within a short cycle.

Tag experiments with AARRR when possible

This gives you a view of funnel coverage instead of just channel activity.

Write learnings even when the result is negative

Invalidated experiments are valuable if they stop you from repeating bad bets.

Promote or kill channels deliberately

Do not let stale channels linger forever in testing.

Creating A Growth Lab

Open Page Manager, choose Special, and add Growth Lab. Each project can have one Growth Lab.

You can also use the templates tab to create supporting strategy pages like:

- Positioning

- Audience

- Messaging

- Channel Strategy

These work especially well alongside Growth Lab because they capture the strategic layer, while experiments capture the execution layer.

Troubleshooting

I don't see Growth Lab in the add-page flow

Growth Lab lives under Special in Page Manager, not under From Scratch.

The funnel map looks empty

The funnel coverage map only lights up when at least one experiment has a funnel stage assigned.

Review opens AI, but the response is weak

Make sure experiments have meaningful bodies and that completed experiments include results, ROI, and learnings. The review is much better when the lab contains actual evidence instead of placeholders.